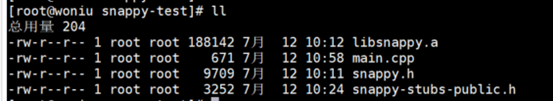

When a company with millions of consumers such as DoorDash builds machine learning (ML) models, the amount of feature data can grow to billions of records, with millions actively retrieved during model inference under low latency constraints. These challenges warrant a deeper look into the selection and design of a feature store, the system responsible for storing and serving feature data. The decisions made here can prevent overrunning cost budgets, compromising runtime performance during model inference, and curbing model deployment velocity.

Features are the input variables fed to an ML model for inference. A feature store, simply put, is a key-value store that makes this feature data available to models in production. At DoorDash, our existing feature store was built on top of Redis, but it had many inefficiencies and came close to running out of capacity. We ran a full-fledged benchmark evaluation on five different key-value stores to compare their cost and performance metrics. Our benchmarking results indicated that Redis was the best option, so we decided to optimize our feature storage mechanism, achieving a threefold cost reduction. We also saw a 38% decrease in Redis latencies, helping to improve the runtime performance of serving models.

Below, we will explain the challenges posed by operating a large-scale feature store. Then, we will review how we were able to quickly identify Redis as the right key-value store for this task. Finally, we will dive into the optimizations we made on Redis to triple its capacity while also improving read performance, by choosing a custom serialization scheme built around strings, protocol buffers, and the Snappy compression algorithm.

Requirements of a gigascale feature store

The challenges of supporting a feature store that needs a large storage capacity and high read/write throughput are similar to the challenges of supporting any high-volume key-value store. Let's elaborate on the requirements before we discuss the challenges of meeting them specifically for a feature store.

Persistent scalable storage: support billions of records

The number of records in a feature store depends on the number of entities involved and the number of ML use cases employed on these entities. At DoorDash, our ML practitioners work with millions of entities such as consumers, merchants, and food items. These entities are associated with features and used in dozens of ML use cases such as store ranking and cart item recommendations. Even though the features used across these use cases overlap, the total number of feature-value pairs runs into the billions.

Additionally, since feature data is used in model serving, it needs to be backed up to disk to enable recovery in the event of a storage system failure.
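To make the scale concrete, here is a rough back-of-the-envelope sketch of how the record count of a feature store could be estimated from entity counts and the features each use case reads. Every number, feature name, and function name below is a hypothetical placeholder chosen for illustration, not a DoorDash figure; the only idea taken from the text is that features shared between use cases are stored once per entity.

```python
from collections import defaultdict

# Hypothetical entity counts, for illustration only (not real DoorDash data).
ENTITY_COUNTS = {
    "consumer": 20_000_000,
    "merchant": 500_000,
    "item": 50_000_000,
}

# Hypothetical use cases: each maps an entity type to the feature names it reads.
USE_CASES = {
    "store_ranking": {
        "consumer": {"order_count_30d", "avg_basket_value"},
        "merchant": {"avg_rating", "prep_time_p50"},
    },
    "cart_item_recommendations": {
        "consumer": {"order_count_30d", "cuisine_affinity"},
        "item": {"popularity_7d"},
    },
}


def distinct_features(use_cases):
    """Union feature names per entity type so overlapping features count once."""
    merged = defaultdict(set)
    for features_by_entity in use_cases.values():
        for entity_type, names in features_by_entity.items():
            merged[entity_type] |= names
    return dict(merged)


def estimate_feature_value_pairs(entity_counts, use_cases):
    """Estimate stored pairs as (entities of a type) x (distinct features of that type)."""
    merged = distinct_features(use_cases)
    return sum(entity_counts[etype] * len(names) for etype, names in merged.items())


if __name__ == "__main__":
    total = estimate_feature_value_pairs(ENTITY_COUNTS, USE_CASES)
    print(f"Estimated feature-value pairs: {total:,}")
```

Even with only a handful of features per entity type, this toy setup already yields over a hundred million pairs; with dozens of use cases and many more features per entity, the count grows into the billions.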
